Think about the last time you struggled to make a purchase, either in-store or online. Perhaps the checkout line at the grocery store was way too long, or you kept getting an error message every time you tried to check out from an online store.

Chances are this hurt your experience as a customer, or you may even have abandoned your shopping cart and left the store altogether.

A study by Vistaprint found that 42% of customers said they were unlikely, and 21% said they were “not likely at all”, to purchase from a poorly designed or unprofessional website. Given those numbers, one of your top priorities should be to build an ecommerce site that delivers a quality buyer experience on the frontend.

Of course, a good user experience isn’t just about designing a pretty website. It’s about all the seen and unseen components of your site working together to help customers navigate easily and complete their shopping journey as efficiently as possible. And as the number of consumer touchpoints grows, you’ll need to understand how the frontend and backend of your ecommerce website function, both separately and together, to create a seamless omnichannel experience. In this blog post, we’ll explain the difference between frontend and backend ecommerce, show how you can separate them in a headless tech stack and walk through common technologies for both.

Ecommerce Frontend vs. Backend Technology

Think of the frontend as the digital storefront of an ecommerce site. It encompasses all the parts of the store that the customer engages with, such as site design, fonts, colours, images and product pages.

As the client side of the website, frontend technology is all about creating a functional and engaging customer experience. Frontend developers usually build this side of the website with HTML for structure, CSS for styling and JavaScript for interactivity.

Backend technology, on the other hand, is the server side of an ecommerce website. Behind the scenes, the backend holds all the data, such as products, prices, order details and customer information, and transfers it to and from the frontend. The web server, application server and database make up the backend and allow the frontend to not only look good but also function properly.

3 Pillars of Frontend Ecommerce.

When designing the frontend of your website, there are three key components that need to be considered. Although these pillars apply to whatever digital sales channel you’re working with, they’re especially important when it comes to creating a successful online shop.

1. UX.

User experience — aka UX — is all about how a customer views and interacts with an online shop. Although UX is not limited to just ecommerce, when it comes to your website, creating a memorable user experience is crucial to turning viewers into customers.

While UX errors such as nonresponsive page design or confusing navigation may seem minor, if they add up over time, they can lead customers to abandon your website completely. Here are some examples of great UX design from our own BigCommerce merchants.

Filter on product listings

On Big R’s online shop, you can filter products by brand, price, stock availability, style and more, and you can apply more than one filter at the same time. Each filter displays the number of matching products, and the filters are dynamic: if you select Under Armour as a brand but there aren’t any Under Armour long-sleeve shirts, the site won’t display the long-sleeve style filter. As a result, the shopper never runs into an error message or a blank screen.
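Dynamic filters like these are typically built by recounting the remaining products whenever a filter changes and showing only the options that still have matches. The TypeScript sketch below illustrates that idea under simplified assumptions: the `Product` and `Filters` shapes, the `applyFilters` and `styleFacets` helpers and the sample catalog are all hypothetical and are not taken from Big R’s site or any BigCommerce API.

```typescript
// Hypothetical product shape; a real catalog would come from the backend.
interface Product {
  name: string;
  brand: string;
  style: string;
  inStock: boolean;
}

// The shopper's current selections. Several brands can be active at once.
interface Filters {
  brands: Set<string>;
  inStockOnly: boolean;
}

// Keep only the products that satisfy every active filter.
function applyFilters(products: Product[], filters: Filters): Product[] {
  return products.filter(
    (p) =>
      (filters.brands.size === 0 || filters.brands.has(p.brand)) &&
      (!filters.inStockOnly || p.inStock),
  );
}

// Count matching products per style. Styles with zero matches never enter
// the map, so the storefront has no empty option to render.
function styleFacets(products: Product[], filters: Filters): Map<string, number> {
  const counts = new Map<string, number>();
  for (const p of applyFilters(products, filters)) {
    counts.set(p.style, (counts.get(p.style) ?? 0) + 1);
  }
  return counts;
}

// Made-up sample data for illustration.
const catalog: Product[] = [
  { name: "Training Tee", brand: "Under Armour", style: "short-sleeve", inStock: true },
  { name: "Fleece Hoodie", brand: "Under Armour", style: "hoodie", inStock: false },
  { name: "Work Shirt", brand: "Other Brand", style: "long-sleeve", inStock: true },
];

const underArmourOnly: Filters = { brands: new Set(["Under Armour"]), inStockOnly: false };
const facets = styleFacets(catalog, underArmourOnly);
// "long-sleeve" is absent because no Under Armour product has that style.
facets.forEach((count, style) => console.log(`${style} (${count})`));
```

Because a style with no matching products never enters the count map, the storefront simply has nothing to render for it, which is how an empty results screen is avoided.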

Purchase Process

According to research by the Baymard Institute, 69.8% of online shopping carts are abandoned after shoppers have already added items to them. However, by improving checkout design, the average large-sized ecommerce store can increase its conversion rate by 35%.

If customers are ready to check out, they likely don’t want to be distracted by ads, pop-ups or a prompt to create an account. Instead, they want to focus on making a payment and completing their purchase.

In Bearpaw’s checkout, for example, the steps of the purchase process are simple and clear, and apart from a chat box in the lower right-hand corner, there are no distractions to deter the customer from completing their purchase.

Shorter payment process

Your checkout process should be quick, easy and have as few clicks as possible. Adding extra steps to the process only increases the number of opportunities for the shopper to get bored and abandon your website.

Luckily, BigCommerce offers an optimized one-page checkout, which minimises friction and simplifies the payment process to increase conversions. Merchants can also enable shipping and billing address autocomplete, which takes much of the tedium out of typing in personal information.
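Address autocomplete fields typically wait until the shopper pauses typing before querying an address-lookup service, rather than sending a request on every keystroke. That pattern is called debouncing. The sketch below is a minimal, generic version under stated assumptions: `lookupAddress` is a hypothetical stand-in for a real lookup request, and the 250 ms delay is an arbitrary example value, not a BigCommerce setting.

```typescript
// Return a wrapper that calls `fn` only once `waitMs` milliseconds have
// passed without another call; earlier pending calls are cancelled.
function debounce<A extends unknown[]>(
  fn: (...args: A) => void,
  waitMs: number,
): (...args: A) => void {
  let timer: ReturnType<typeof setTimeout> | undefined;
  return (...args: A) => {
    if (timer !== undefined) clearTimeout(timer);
    timer = setTimeout(() => fn(...args), waitMs);
  };
}

// Hypothetical stand-in for a request to an address-lookup service.
const lookups: string[] = [];
function lookupAddress(query: string): void {
  lookups.push(query);
}

// 250 ms is an arbitrary example delay.
const onAddressInput = debounce(lookupAddress, 250);

// Simulate fast typing: only the last value should trigger a lookup.
for (const partial of ["1", "12", "123", "123 Ma", "123 Main St"]) {
  onAddressInput(partial);
}
```

Five keystrokes produce a single lookup for "123 Main St", which keeps the field responsive without flooding the lookup service.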

Rock Bottom Golf is a great example of how to shorten the payment process: it offers address autocomplete as well as multiple payment options, including PayPal and Affirm, which let customers connect their payment accounts for an even faster checkout in the future.

2. Performance.

Performance is closely tied to UX, since page speed and functionality of your web application also affect the shopper experience.

In fact, “the first five seconds of page load time have the highest impact on conversion rates,” and conversion rates drop by 4.42% with every additional second of load time. In other words, site performance can make or break your store.
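To see what that statistic could mean in practice, the sketch below assumes each extra second removes 4.42% of the current conversion rate, compounding. That is only one reading of the figure, it only makes sense within the first few seconds the quote refers to, and the 3% baseline is a hypothetical example rather than data from the study.

```typescript
// Assumption: every extra second of load time removes 4.42% of the current
// conversion rate (a relative, compounding drop). This is an illustration,
// not a model published by the study.
const DROP_PER_SECOND = 0.0442;

function estimatedConversionRate(baselineRate: number, extraSeconds: number): number {
  return baselineRate * Math.pow(1 - DROP_PER_SECOND, extraSeconds);
}

// Hypothetical store: 3% baseline conversion, pages 3 seconds slower.
const slower = estimatedConversionRate(0.03, 3);
console.log(`${(slower * 100).toFixed(2)}%`); // prints "2.62%"
```

Under this assumption, a store converting 3% of visitors would fall to roughly 2.62% if its pages became three seconds slower.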

Images are one key factor in page load time: the larger the image file, the slower the page loads. However, compressing images to speed up your pages (which also helps SEO) can reduce their visual quality. Luckily, there are several image compression tools that help you optimize your photos and find a happy medium.
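Alongside compression, a common way to find that happy medium is to publish each photo in several widths and let the browser pick a suitable one through the HTML `srcset` attribute. The helper below builds a `srcset` value. The `?w=` resizing parameter is a hypothetical image-CDN convention (real CDNs and platforms each use their own URL format), and `buildSrcset` and the example URL are illustrative names, not part of any platform API.

```typescript
// Build an HTML srcset value such as "photo.jpg?w=320 320w, photo.jpg?w=640 640w".
// Assumes a hypothetical CDN that resizes via "?w=" and a URL with no existing query string.
function buildSrcset(imageUrl: string, widths: number[]): string {
  return widths
    .slice()
    .sort((a, b) => a - b)
    .map((w) => `${imageUrl}?w=${w} ${w}w`)
    .join(", ");
}

const srcset = buildSrcset("https://cdn.example.com/products/boot.jpg", [1280, 320, 640]);
console.log(
  `<img src="https://cdn.example.com/products/boot.jpg?w=640" srcset="${srcset}" ` +
    `sizes="(max-width: 600px) 100vw, 50vw" alt="Leather boot">`,
);
```

The `sizes` attribute tells the browser how wide the image will be displayed, so a phone can download one of the smaller files instead of the full-size photo.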

Additionally, here are some KPIs, suggested by Common Places, that you can monitor to determine where your performance is excelling or lacking:

Measure your audience reach and impact.

Analyse traffic sources.

Measure average session time and bounce rate.

Identify conversion rates.

Measure ROI and profits.
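Several of these KPIs reduce to simple ratios. The sketch below computes bounce rate, conversion rate, average session time and ROI from a list of sessions. The `Session` shape, the `computeKpis` helper and the sample numbers are hypothetical, and analytics tools may define these metrics slightly differently (for example, what counts as a bounce).

```typescript
// Hypothetical per-visit record exported from an analytics tool.
interface Session {
  pagesViewed: number;
  durationSec: number;
  converted: boolean;
}

interface Kpis {
  bounceRate: number; // share of sessions that viewed only one page
  conversionRate: number; // share of sessions that ended in a purchase
  avgSessionSec: number; // mean session length in seconds
  roi: number; // (revenue - cost) / cost
}

function computeKpis(sessions: Session[], revenue: number, cost: number): Kpis {
  if (sessions.length === 0 || cost === 0) {
    throw new Error("need at least one session and a non-zero cost");
  }
  const total = sessions.length;
  const bounces = sessions.filter((s) => s.pagesViewed === 1).length;
  const conversions = sessions.filter((s) => s.converted).length;
  const totalSec = sessions.reduce((sum, s) => sum + s.durationSec, 0);
  return {
    bounceRate: bounces / total,
    conversionRate: conversions / total,
    avgSessionSec: totalSec / total,
    roi: (revenue - cost) / cost,
  };
}

// Made-up sample: four sessions, $5,000 revenue on $2,000 of spend.
const sample: Session[] = [
  { pagesViewed: 1, durationSec: 10, converted: false },
  { pagesViewed: 5, durationSec: 240, converted: true },
  { pagesViewed: 3, durationSec: 120, converted: false },
  { pagesViewed: 1, durationSec: 30, converted: false },
];
const report = computeKpis(sample, 5000, 2000);
console.log(report); // bounceRate 0.5, conversionRate 0.25, avgSessionSec 100, roi 1.5
```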

3. Design.

A five-year study by McKinsey, covering 300 companies across multiple geographies and industries, found that companies that prioritised design outperformed those that didn’t and grew considerably faster. This suggests that how your site looks and functions truly matters to shoppers.

First impressions are crucial. Imagine browsing an online store with a messy layout, mismatched fonts and blurry images. Chances are it won’t take long before you leave and find a better-looking site.

Here are some tips to help improve your design and make a good first impression.

Design for the user experience: Make sure your website is user-friendly on both desktop and mobile, so that it’s easy for the user to navigate from one page to the next. Include CTAs that clearly show the next step in the buyer journey so that the customer has the most seamless experience possible.

Maintain a consistent brand and design: Whether a user is browsing your social media page, online store or app, they need to see consistency across all channels. Make your brand unique and easily recognisable so that you can stand out from the crowd.

Focus on colour palette: Colours are one of the first things a customer notices about your brand, so it’s important to choose colours that personify your brand and attract your target audience. Research colour theory, which explains how different colours convey different meanings and feelings, and try to choose complementary colours when designing your website.

Benefits of a Headless Tech Stack

Many traditional, monolithic ecommerce platforms connect their frontend and backend technologies in a tightly coupled system. While these platforms can make it easy for merchants to build an online store, they often lack the flexibility needed to customise the website and add new features.

This is where headless commerce saves the day.

A headless approach to ecommerce decouples the frontend presentation layer from the backend ecommerce functionality, allowing them to work independently and communicate through APIs. This means you can integrate with multiple technologies and choose the frontend experience that makes the most sense for your business, whether that’s WordPress, Drupal, Adobe Experience Manager, Bloomreach, Joomla or Sitecore. Meanwhile, the ecommerce platform continues to power the backend commerce functionality.

But the frontend isn’t the only layer that can be customized. With a headless approach, the backend can be split into modules, which removes complexity and allows you to adapt more quickly to digital disruptions.
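In code, decoupling usually means the presentation layer talks to the backend only through a narrow, API-shaped contract, so either side can be replaced without touching the other. The TypeScript sketch below illustrates the idea. `CommerceBackend`, `InMemoryBackend` and `productSummary` are hypothetical names, and a real headless setup would implement the contract with HTTP calls to the platform’s APIs rather than an in-memory list.

```typescript
// Hypothetical product data as a commerce backend might return it.
interface Product {
  id: string;
  name: string;
  priceCents: number;
}

// The only thing the frontend knows about the backend: an API-shaped contract.
interface CommerceBackend {
  getProduct(id: string): Promise<Product | undefined>;
}

// In-memory stand-in for a real backend reached over the network.
class InMemoryBackend implements CommerceBackend {
  private readonly products: Product[];

  constructor(products: Product[]) {
    this.products = products;
  }

  async getProduct(id: string): Promise<Product | undefined> {
    return this.products.find((p) => p.id === id);
  }
}

// Presentation logic depends on the contract, not on any specific platform,
// so swapping the backend requires no changes here.
async function productSummary(backend: CommerceBackend, id: string): Promise<string> {
  const product = await backend.getProduct(id);
  if (product === undefined) return "Product not found";
  return `${product.name}: $${(product.priceCents / 100).toFixed(2)}`;
}

const backend = new InMemoryBackend([{ id: "p1", name: "Trail Boot", priceCents: 12999 }]);
productSummary(backend, "p1").then((text) => console.log(text)); // prints "Trail Boot: $129.99"
```

Replacing `InMemoryBackend` with a class that calls a real commerce API would leave `productSummary` untouched, which is the practical payoff of the headless split.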

Benefits of Headless Frontend Ecommerce

1. Safer upgrades.

Since the frontend and backend function independently, a headless approach gives you the freedom to make agile changes to either layer without having to adjust the entire system. Thus, developers can quickly react to market trends and make changes to the frontend while avoiding costly backend development.

2. More personalization options.

Since the frontend is no longer dependent on the backend, you can use whichever frontend or CMS works best for you. Rather than relying on your ecommerce platform to do the work for you, you can customize your storefront to suit your brand and your audience, integrating all the applications and functionality your business needs.

3. Easier integrations.

A headless approach enables the backend to function across multiple touchpoints, such as smartphones, smartwatches, smart speakers and digital signage. Powerful APIs connect the two layers to ensure consistent, customized experiences across various channels.
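As a small illustration of one backend feeding several touchpoints, the sketch below adapts the same product payload to each channel’s format at the presentation layer. The `Channel` values, the `present` function and the output wording are hypothetical; in practice each channel would usually be its own frontend consuming the same APIs.

```typescript
// Hypothetical product payload shared by every channel.
interface Product {
  name: string;
  priceCents: number;
  inStock: boolean;
}

type Channel = "web" | "voice" | "signage";

// Each touchpoint formats the same backend data in its own way.
function present(product: Product, channel: Channel): string {
  const price = `$${(product.priceCents / 100).toFixed(2)}`;
  switch (channel) {
    case "web":
      return `${product.name} | ${price} | ${product.inStock ? "In stock" : "Sold out"}`;
    case "voice":
      return product.inStock
        ? `${product.name} is in stock for ${price}.`
        : `Sorry, ${product.name} is currently sold out.`;
    case "signage":
      return `${product.name.toUpperCase()} ${price}`;
    default:
      throw new Error(`Unknown channel: ${String(channel)}`);
  }
}

const boot: Product = { name: "Trail Boot", priceCents: 12999, inStock: true };
for (const channel of ["web", "voice", "signage"] as Channel[]) {
  console.log(`${channel}: ${present(boot, channel)}`);
}
```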

4. Greater flexibility.

The best thing about a headless approach is that you’re never stuck where you’re at. If you get tired of a particular frontend or backend technology, you’re not tied to it! Headless gives you the freedom to test out different platforms and features until you find the ones that work best for you.

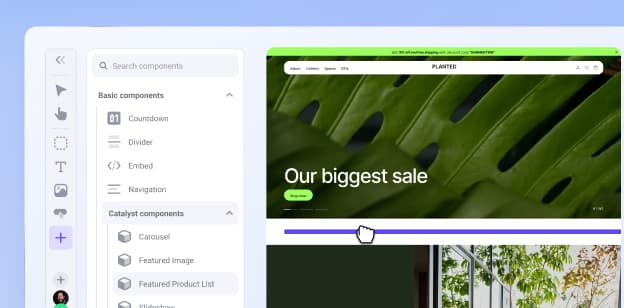

Explore Catalyst, the future of storefront experience.

Experience the storefront that's setting a new standard for modern commerce, designed for your whole team to love.

Common Pain Points of Choosing a Frontend

Of course, when deciding which frontend to use for your website, there are a couple of pain points to keep in mind.

1. Ability to integrate with your tech stack.

Ensuring that your frontend and backend work together is crucial for the success of your online store. Backend developers can work without a frontend, but most frontend work depends on a backend for its data, so you’ll need to make sure your frontend services integrate with your ecommerce solution.

2. Ensuring your frontend has a good user experience.

As you continue to grow and develop your frontend, you’ll need to continuously monitor whether additional features or adjustments are degrading the user experience. Although it can be a hassle, running unit tests, integration tests and reporting tests is one of the best ways to ensure that the user experience is consistent.
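As a small illustration of the unit-testing part, the sketch below tests a cart-total calculation, the kind of logic a storefront change could silently break. `cartTotalCents` and `expectEqual` are hypothetical names, and a real project would typically use a test framework rather than this hand-rolled assertion helper.

```typescript
interface CartLine {
  priceCents: number;
  quantity: number;
}

// Sum line totals in integer cents to avoid floating-point rounding errors.
function cartTotalCents(lines: CartLine[]): number {
  return lines.reduce((sum, line) => sum + line.priceCents * line.quantity, 0);
}

// Minimal assertion helper so the example runs without a test framework.
function expectEqual(actual: number, expected: number, label: string): void {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${expected}, got ${actual}`);
  }
  console.log(`ok - ${label}`);
}

expectEqual(cartTotalCents([]), 0, "empty cart totals zero");
expectEqual(cartTotalCents([{ priceCents: 1999, quantity: 2 }]), 3998, "quantity multiplies price");
expectEqual(
  cartTotalCents([
    { priceCents: 1999, quantity: 1 },
    { priceCents: 501, quantity: 3 },
  ]),
  3502,
  "multiple lines are summed",
);
```

Running tests like these after every frontend change gives an early warning before a regression reaches shoppers.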

Frontend Ecommerce Technologies

Since the frontend is the layer that users see and interact with, it’s key that UI designers and UX engineers choose a solution that not only makes their website look good but also helps it function at its best.

From scripting languages to design tools, these technologies work together to build an online store that loads quickly, catches the eye and adapts well to all devices. Luckily, modern web browsers such as Chrome and Firefox have built-in JavaScript engines, so plugins are rarely needed.

Let’s take a look at some of the most commonly used frontend ecommerce technologies.

HTML.

HTML (Hypertext Markup Language) is the standard markup language for web pages and web applications. It acts as the building block for text and images, defining the meaning and structure of a web page and telling the browser how to display the content.

JavaScript.

Once you’ve covered the basics of HTML, you can start adding interactivity to your website using JavaScript. JavaScript is a dynamic programming language that enables developers to create interactive games, animation, 2D and 3D graphics and much more. With an expert JavaScript developer on your web development team, you’ll be able to liven up the user experience and add a dimension of creativity to your online shop.

AngularJS.

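Using HTML as its template language, AngularJS is a structural framework for dynamic web apps. It is the predecessor of Angular, a separate framework rewritten from the ground up, and Google ended official support for AngularJS at the end of 2021. The job of AngularJS is to make static HTML dynamic and extend its syntax so that your website’s components can be expressed more clearly.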

Node.js.

An open-source, cross-platform runtime, Node.js runs JavaScript on the server and is designed for building scalable network applications. It combines JavaScript with a large ecosystem of modules to provide an event-driven, asynchronous runtime environment.

ReactJS.

ReactJS (or simply “React”) is an open-source JavaScript library for building dynamic user interfaces, and it is widely used for single-page web apps. It focuses on the presentation layer, and through React Native it can also power mobile apps, helping provide a cohesive user experience.

VBScript.

VBScript (Visual Basic Script) was created by Microsoft as a scripting language similar to JavaScript. On the web, it was used to add functionality and interactivity to pages, but client-side VBScript only ran in Internet Explorer and Microsoft has since deprecated it, so it is rarely used for new sites.

AJAX.

AJAX (Asynchronous JavaScript And XML) is a set of techniques for building more efficient and interactive web applications. It allows web developers to update parts of a page asynchronously without reloading the whole page, which makes applications more responsive, and to read data from the web server even after the page has loaded.
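Today the AJAX pattern is usually implemented with the browser’s `fetch` API rather than the `XMLHttpRequest` object the name originally referred to, and JSON has largely replaced XML. The sketch below refreshes a stock label without reloading the page. The `/api/stock/` endpoint and its `{ available }` response shape are hypothetical, and the fetch function is passed in as a parameter so the logic can be exercised without a server.

```typescript
// Minimal shape of a fetch-style function; the browser's built-in fetch fits it.
type Fetcher = (url: string) => Promise<{ ok: boolean; json(): Promise<unknown> }>;

// Hypothetical response body from the stock endpoint.
interface StockResponse {
  available: number;
}

// Request the latest stock level in the background (no page reload) and
// return the text for the product page's stock label.
async function refreshStockLabel(fetcher: Fetcher, productId: string): Promise<string> {
  const res = await fetcher(`/api/stock/${encodeURIComponent(productId)}`);
  if (!res.ok) return "Stock unavailable";
  const data = (await res.json()) as StockResponse;
  return data.available > 0 ? `${data.available} in stock` : "Sold out";
}

// Fake server for local experimentation: "sku-1" has 4 units, everything else 0.
const fakeFetch: Fetcher = async (url) => ({
  ok: true,
  json: async () => ({ available: url.endsWith("/sku-1") ? 4 : 0 }),
});

refreshStockLabel(fakeFetch, "sku-1").then((label) => console.log(label)); // prints "4 in stock"

// In a browser, with a hypothetical element id:
// document.getElementById("stock-label")!.textContent = await refreshStockLabel(fetch, "sku-1");
```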

jQuery.

jQuery is a fast, lightweight library that makes it simpler to use JavaScript in your applications. With a user-friendly API that works across multiple browsers, jQuery allows developers to accomplish the same tasks with fewer lines of JavaScript.

Responsive Web Design.

Responsive Web Design (RWD) is a design approach that allows for optimal viewing experiences for websites and webpages across all devices and screen sizes. Since users often switch between desktop, mobile and other screen sizes, RWD helps developers adapt their applications to suit the needs of the user wherever they are.
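Responsive layouts are normally written as CSS media queries, but the underlying logic is easy to express in code. This sketch mirrors mobile-first `min-width` breakpoints by choosing how many product-grid columns to show for a given viewport width; the breakpoint values and the `gridColumns` helper are hypothetical examples, not a standard.

```typescript
// Hypothetical mobile-first breakpoints, ordered from smallest to largest.
// Like CSS min-width media queries, each one applies from its width upward.
const breakpoints: { minWidth: number; columns: number }[] = [
  { minWidth: 0, columns: 1 },
  { minWidth: 600, columns: 2 },
  { minWidth: 900, columns: 3 },
  { minWidth: 1200, columns: 4 },
];

// Return the column count of the largest breakpoint the viewport reaches.
function gridColumns(viewportWidth: number): number {
  let columns = 1;
  for (const bp of breakpoints) {
    if (viewportWidth >= bp.minWidth) columns = bp.columns;
  }
  return columns;
}

for (const width of [375, 768, 1024, 1440]) {
  console.log(`${width}px -> ${gridColumns(width)} column(s)`);
}
```

In a browser you could pass `window.innerWidth`, although in practice CSS rules such as `@media (min-width: 600px) { ... }` handle this without any script.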

Vue.js.

Vue.js is a progressive JavaScript framework for building user interfaces and single-page applications. Known for its gentle learning curve, Vue.js is incrementally adoptable, so developers can introduce it into a project gradually, and it integrates easily with other libraries.

Backend Ecommerce Technologies

In order for the frontend presentation layer to provide a quality user experience, it must be supported by the backend commerce functionality. These are some of the most common backend technologies that can help build the skeleton of your ecommerce website.

SaaS solutions.

SaaS (Software-as-a-Service) is a subscription-based software service, much like how you would pay a monthly fee for renting a house. Rather than having to download or buy the SaaS platform yourself, it’s instead hosted by the SaaS provider, who manages the security, maintenance and upkeep of the platform.

Here are a few of the many benefits of adopting a SaaS solution:

Fast setup.

Ease of use.

Scalability.

Customer support.

Unfortunately, with many SaaS platforms, you won’t have the ability to alter the source code, which means limited customization. However, BigCommerce takes a unique approach by offering an Open SaaS solution, which means that merchants get all the benefits of a SaaS solution — ease of use, high-performance and scalability — combined with platform-wide APIs, so that you can customize your site and integrate all the necessary external applications and functionalities.

PHP.

PHP is an open-source, general-purpose scripting language widely used in ecommerce web development. It can be embedded in HTML and is free to download and use. Many open-source ecommerce platforms, such as Magento, are built with PHP.

Python.

Python is a popular, general-purpose programming language used to build web applications, analyse data and automate tasks. Its syntax is generally considered simpler and more readable than PHP’s, so it may be a better option for developers who are just starting out.

Java.

Java is a general-purpose, object-oriented programming language designed to have as few implementation dependencies as possible. It’s used across many kinds of applications, including gaming systems, social media platforms and audio and video applications.

MySQL.

MySQL is an open-source relational database management system (RDBMS), software that manages databases based on the relational model. Since ecommerce websites depend on large amounts of data stored in a database, it’s vital that this data is stored and retrieved reliably.

PostgreSQL.

A powerful, open-source RDBMS, PostgreSQL extends the SQL language with additional features, such as full-text search and a built-in messaging system, that help it handle complex workloads.

ASP.NET.

ASP.NET is an open-source, cross-platform framework that developers can use to create dynamic web applications and services. It extends the .NET developer platform, which combines tools, programming languages and libraries for building many types of apps.

The Final Word

With competition heating up in the ecommerce industry, creating a website that stands out is crucial for success. But a website that stands out is one that both looks good on the frontend and functions properly on the backend.

While these two layers may be independent, especially in a headless approach, the key is to make them work together to deliver the best possible user experience and site performance. Now that you know the most common frontend and backend technologies, you’ll be well on your way to building an ecommerce site that serves your customers well and helps your business scale.